Jailbreaking Black-Box LLMs Using Promptfoo: A Walkthrough

Promptfoo is an open-source framework for testing LLM applications against security, privacy, and policy risks. It is designed to help developers discover and fix critical LLM failures.

Latest Posts

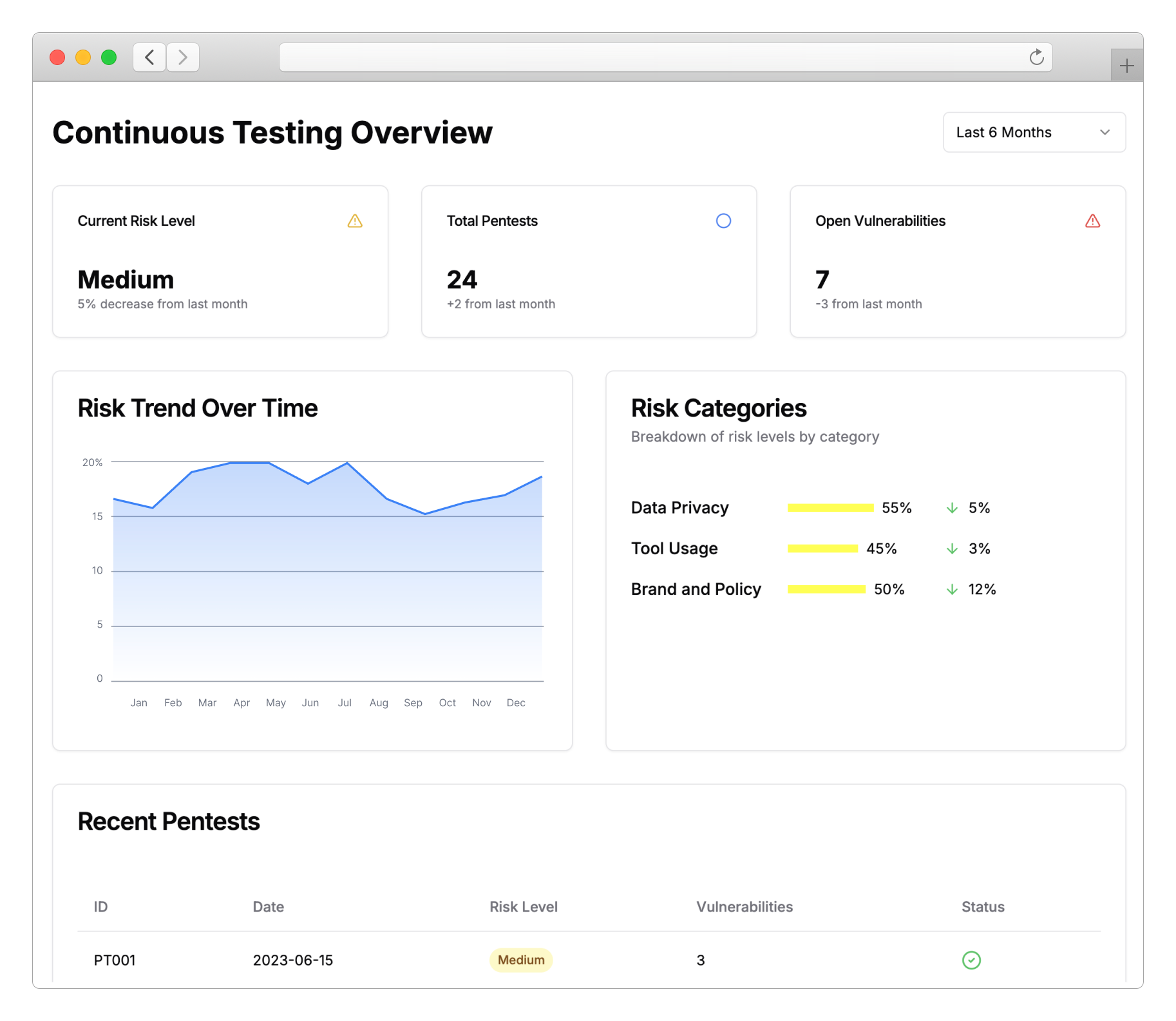

Promptfoo for Enterprise

Today we're announcing new Enterprise features for teams developing and securing LLM applications.

New Red Teaming Plugins for LLM Agents: Enhancing API Security

We're excited to announce the release of three new red teaming plugins designed specifically for Large Language Model (LLM) agents with access to internal APIs.

Promptfoo raises $5M to fix vulnerabilities in AI applications

Today, we’re excited to announce that Promptfoo has raised a $5M seed round led by Andreessen Horowitz to help developers find and fix vulnerabilities in their AI applications.

Automated jailbreaking techniques with DALL-E

We all know that image models like OpenAI’s DALL-E can be jailbroken to generate violent, disturbing, and offensive images.